Designed for someone else

Somewhere in the Netherlands, right now, someone is sitting in front of a screen trying to log into DigiD, the government authentication app. They have their phone. They have, probably, a piece of paper with a password written on it, because remembering passwords is not something their brain does well. The app has updated since the last time they used it. The button is in a different place. The sequence of steps is slightly different. A notification is asking them to enable something they don't understand. They can’t proceed without making a choice. They close the app and call their daughter, or their neighbour, or the library.

This person is not rare. They are not an edge case. They are one of a very large number of people in this country for whom the digital layer of public life is a wall rather than a door. And the wall is getting higher every year.

It's tempting to say these are people "with disabilities": people with intellectual disabilities, cognitive impairments, dementia, brain injuries, low literacy, or simply the kind of brain that doesn't take naturally to interfaces. But disability is the wrong frame, because it makes this the person’s problem. If we call them disabled, we never have to ask where the problem came from in the first place: who decides what abilities you're supposed to have in order to participate in ordinary life? And why do the requirements keep going up?

Technology gets more complicated over time, because more is possible. But more possibilities also imply more choices to be made.

Thirty years ago, renewing a prescription meant walking to the pharmacy. Fifteen years ago, it meant a phone call. Today it means an app, a login, a code sent to your email, a series of screens that assume you can read quickly, follow a flow, recover from an error, and understand what words like "authenticate" mean. None of those assumptions are neutral. Each one is a small exam you have to pass to simply get your medicine.

The people writing these exams are not cruel. They are, for the most part, thoughtful designers and engineers trying to build things that work. Things that are safe, and solid. The problem isn’t cruelty; it’s optimisation.

Every significant digital product today is shaped by experimentation: two versions of a screen are shown to different users. Whichever version produces better numbers (more completions, more clicks, more conversions, less time to task) wins. The losing version is discarded. The winning version becomes the new standard, and the next experiment runs against it. This happens continuously, invisibly, at enormous scale. It is how nearly every app you use got to be the way it is.

This process has a blind spot, and the blind spot is the whole reason I'm writing this blog.

The experiments measure the average. What wins is whatever works best across the broad middle of users. If a design change makes things faster for most people and impossible for some people, it ships, because the "most" outweighs the "some" in the numbers. And the people for whom it is now impossible have no way to register that fact. The only channel for feedback is the interface itself, and the interface is precisely what they cannot use. They don't file a bug report. They don't fill out a survey. They close the app, or they never opened it. In the data they look identical to someone who simply wasn't interested. Their absence gets read as a lack of interest. The developers move on to the next round of optimisation, aimed somewhere else.
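To make that arithmetic concrete, here is a toy calculation. Every number in it is invented for illustration, and the group names and the `Group` class are my own assumptions, not data from any real experiment. It only shows how an aggregate metric can go up while one group is almost entirely locked out.

```python
from dataclasses import dataclass


@dataclass
class Group:
    """A hypothetical slice of users; all figures are made up."""
    name: str
    users: int
    completed_a: int  # users who finish the task with the current design A
    completed_b: int  # users who finish the task with the new design B


def overall_rate(groups, variant):
    """Completion rate across all users, which is what the experiment sees."""
    total = sum(g.users for g in groups)
    done = sum(getattr(g, f"completed_{variant}") for g in groups)
    return done / total


groups = [
    Group("broad middle", users=9000, completed_a=7200, completed_b=8100),
    Group("needs a simpler flow", users=1000, completed_a=600, completed_b=50),
]

rate_a = overall_rate(groups, "a")
rate_b = overall_rate(groups, "b")
winner = "b" if rate_b > rate_a else "a"

print(f"Design A overall: {rate_a:.1%}")
print(f"Design B overall: {rate_b:.1%}")
print(f"Design that ships: {winner.upper()}")

# The per-group view is exactly what the experiment does not have:
# in real data nobody is labelled "needs a simpler flow".
for g in groups:
    print(f"{g.name}: A {g.completed_a / g.users:.0%}, B {g.completed_b / g.users:.0%}")
```

With these invented figures, design B wins on the overall completion rate (8,150 of 10,000 against 7,800 of 10,000), while completions in the smaller group fall from 600 to 50. The final loop is only possible here because I labelled the groups by hand. In a real experiment, those 950 extra non-completions are just people who didn't finish.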

An exaggerated sketch of the process at play: improving the design through repeated experiments makes the product better for some, but at the cost of excluding others.

What does that mean? It means that a quiet, continuous process is tuning public life into a digital environment that fits a smaller and smaller group of people. And the people outside that group are being silenced by the very tools that exclude them. They cannot complain about an app inside the app. They cannot email the municipality from a form they can't fill in. The helpline has a menu tree. The goalposts keep moving and nobody announced the game.

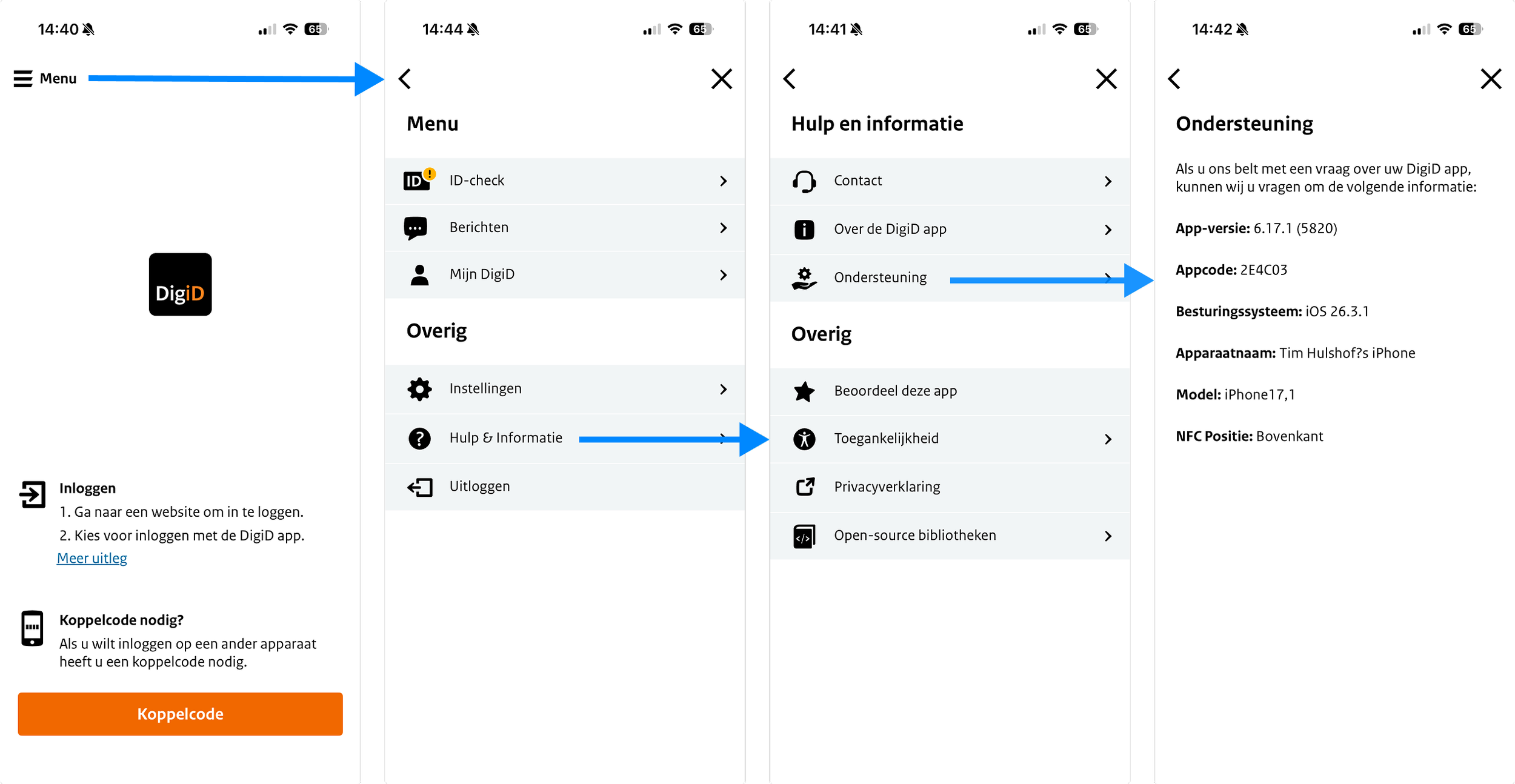

If you want help, following the logical flow in the DigiD app is not going to solve your problems…

This would be bad enough if we were talking about entertainment apps, or shopping. But we are not. DigiD is not a consumer product. Neither is the tax office portal, the municipal waste app, the health insurance site, the patient portal at the hospital, the appointment system at the GP, the pension overview, the public transport planner. These are the load-bearing structures of ordinary adult life. And increasingly they are the only way to do the things they do. The paper form is gone. The counter is closed. The phone line routes you back to the website.

This is not an accessibility problem in the narrow sense the term has taken on: screen readers, colour contrast, keyboard navigation. Those matter, and there are laws about them, and there are people who work hard on them. That’s a great thing. But cognitive accessibility has almost no legal scaffolding, almost no standard practice, almost no one whose job it is to defend it inside a product team. It is the accessibility problem with no advocates in the room when the decisions are made.

So here is what this blog is going to be about.

It's going to be about the people being quietly filtered out of public digital life, and what we owe them. It's going to be about the design patterns that exclude them, often without anyone noticing. It's going to be about how statistical optimisation, applied over and over again, pulls design toward the average user, and how that pull has real human costs. And it's going to be about what good design for cognitive accessibility actually looks like, because this is not only a critique. There are better ways to build these things, and I want to write about those too.

I am a mathematician by training and a data scientist and software engineer by trade. I work with designers, with public sector people, with healthcare organisations, and with people who spend their lives supporting adults who need more care and support than most. What I see from all those angles is the same thing: a growing gap between what the digital world assumes of people and what a large fraction of people can actually do. Nobody chose this gap. It is the accumulated result of a million small optimisations, each reasonable on its own terms.

That is exactly what makes it worth writing about. Problems caused by nobody in particular need a systemic change to fix, because there is no villain to defeat. Only a direction to reverse. I'd like to try.